Why Service Level Planning Collapses in a Chat-and-Message World

A GZP Field Guide

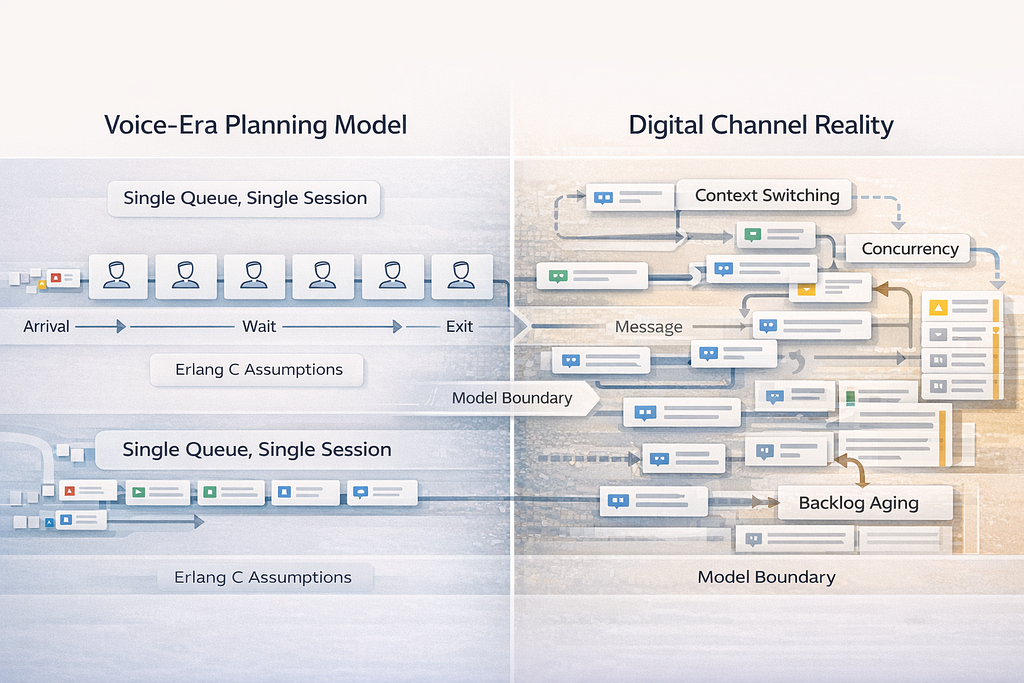

Traditional queue assumptions hide the true constraint: attention, context switching, and aging backlog.

TL;DR

- Erlang C was built for a single real-time queue where one agent handles one interaction end to end. Most centers no longer have that shape.

- Chat and messaging add concurrency and context switching, so “average handle time” stops describing capacity.

- Email and case work behave like inventory, not a line, so interval-based queue math under-plans backlog aging.

- Converting digital into “voice equivalents” creates false confidence and brittle schedules.

- Modern planning works better when it splits real-time queues from backlog queues, then uses channel-specific promises and intraday controls.

The problem is not Erlang C. The problem is the job being handed to it.

Erlang C earned its place because it did something valuable. It connected forecast volume, average handle time, and staffing into one shared planning language. That language let leaders talk about service level, occupancy, and schedule coverage without having to debate the entire system every week. That worked when most work looked like voice.

Most work does not look like voice anymore.

Modern contact centers run blended demand: voice, chat, messaging, email, in-app tickets, and social. Customers switch channels mid-journey. Agents handle multiple conversations at once. Some work is truly real-time. Some work is a backlog that can age across days. Some interactions are not single sessions at all. They are threads that pause and restart.

Erlang C is a queue model designed for a particular shape of work. When the work changes shape, the model can still produce a number, but the number stops being a reliable control. That is the failure pattern most leaders recognize. The staffing requirement looks precise. The plan looks reasonable. Then performance falls apart in production. The reaction becomes overtime, shrinkage crackdowns, and constant reforecasting.

The issue is not math. The issue is a mismatch between a voice-era model and a digital-era operating system.
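For reference, the voice-era calculation fits in a few lines. The sketch below is a minimal Python version of the standard Erlang C formula. The function names and the sample inputs are illustrative assumptions, not taken from any particular planning tool.

```python
import math


def erlang_c_wait_probability(agents: int, offered_load: float) -> float:
    """Probability that an arriving contact has to wait (Erlang C)."""
    if agents <= offered_load:
        return 1.0  # queue is unstable, so every contact waits
    # Build Erlang B with the stable recursion, then convert to Erlang C.
    blocking = 1.0
    for n in range(1, agents + 1):
        blocking = offered_load * blocking / (n + offered_load * blocking)
    utilisation = offered_load / agents
    return blocking / (1.0 - utilisation * (1.0 - blocking))


def service_level(agents, calls_per_hour, aht_seconds, target_seconds):
    """Fraction of contacts answered within target_seconds."""
    offered_load = calls_per_hour * aht_seconds / 3600.0  # in erlangs
    if agents <= offered_load:
        return 0.0
    p_wait = erlang_c_wait_probability(agents, offered_load)
    decay = math.exp(-(agents - offered_load) * target_seconds / aht_seconds)
    return 1.0 - p_wait * decay


def agents_required(calls_per_hour, aht_seconds, target_seconds, sl_goal):
    """Smallest agent count that meets the service level goal."""
    agents = max(1, math.ceil(calls_per_hour * aht_seconds / 3600.0))
    while service_level(agents, calls_per_hour, aht_seconds, target_seconds) < sl_goal:
        agents += 1
    return agents


# Hypothetical interval: 100 calls per hour, 360 s AHT, 80% answered in 20 s.
print(agents_required(100, 360, 20, 0.80))
```

The function returns a precise-looking number for any inputs it is given. Nothing in it knows whether the contact is a call, a concurrent chat, or an email thread that reopens tomorrow.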

Digital channels change the unit of work

Traditional planning measures contacts per interval. That framing assumes each contact arrives, waits, gets handled, and exits.

Digital channels break that tidy life cycle.

A chat is rarely a single block of continuous agent effort. It has bursts, pauses, and returns. Messaging threads can stretch across hours or days. Email cases can require multiple touches, waiting on the customer, the merchant, or a back-office step.

In these channels, “arrival rate” is not just new demand. It is also re-entry demand.

When someone waits too long on chat, they do not always abandon quietly. Many retry. They open a second session. They move to voice. They post in social. These are not new issues. They are the same issue chasing closure through different doors. Traditional queue planning often treats that as rising demand rather than avoidable repeat demand.

Once repeat demand enters the system, planning gets harder. The center becomes a demand amplifier. Slow response increases retries. Retries increase volume. Volume increases delay. The loop tightens.

This is why digital service can feel stable for weeks and then suddenly tip. The tipping point is not volume. It is retry behavior.
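The loop can be illustrated with a toy model. The sketch below assumes fixed capacity per interval, and assumes that each unserved contact re-enters in the next interval with probability `retry_rate` while the rest give up. Those assumptions and all names are hypothetical simplifications, not a calibrated model.

```python
def simulate_retries(new_per_interval, capacity_per_interval, retry_rate, intervals):
    """Return total contact attempts seen in each interval."""
    attempts_history = []
    retries = 0.0
    for _ in range(intervals):
        attempts = new_per_interval + retries
        unserved = max(0.0, attempts - capacity_per_interval)
        # Assumption: a fixed share of unserved contacts comes back next interval.
        retries = unserved * retry_rate
        attempts_history.append(attempts)
    return attempts_history


# Same true demand (105 new issues against capacity of 100), different retry habits.
for rate in (0.5, 0.8, 0.9):
    settled = simulate_retries(105, 100, rate, intervals=300)[-1]
    print(f"retry_rate={rate}: about {settled:.0f} attempts per interval")
```

In this toy model, demand that is 5 percent over capacity settles near 110 attempts per interval with a retry rate of 0.5 and near 150 with a retry rate of 0.9. The number of new issues never changed. Only the retry behavior did.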

Concurrency is not free capacity. It is risk.

Many operations try to adapt by forcing digital channels into voice-like equivalents.

Chat contacts are counted. Concurrency is applied as a factor. Handle time is adjusted into an “effective AHT.” Then Erlang C runs and produces a staffing requirement. This approach looks clean. It also hides the real constraint.

The constraint is not minutes. The constraint is attention.

Concurrency is safe only when the work is simple, tooling is fast, and customers respond at a predictable pace. Under stress, concurrency collapses. Customers type faster, follow up more, and paste more context. Agents spend more time re-reading and re-orienting. The context-switching tax rises. Quality slips. Escalations rise. Rework rises. Then the center is forced to reduce concurrency to protect quality, right when volume is highest.

A static concurrency factor cannot capture that stress response. It turns a live operating dial into a fixed assumption. That is how schedules become brittle.

Concurrency can still be valuable. It just needs to be managed like a control with guardrails, not treated like a permanent capacity boost.
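A quick calculation shows how much weight the fixed factor carries. This sketch reproduces the "effective AHT" shortcut described above, with hypothetical volumes and a hypothetical function name, and compares the planned concurrency with what agents can sustain under stress.

```python
def chat_workload_erlangs(chats_per_hour, session_seconds, concurrency):
    """Agent-equivalents of chat load under the 'effective AHT' shortcut."""
    effective_aht = session_seconds / concurrency
    return chats_per_hour * effective_aht / 3600.0


# Hypothetical hour: 300 chats, 12-minute sessions.
planned = chat_workload_erlangs(300, 720, concurrency=3.0)
stressed = chat_workload_erlangs(300, 720, concurrency=2.0)

print(planned)   # 20.0 agent-equivalents in the plan
print(stressed)  # 30.0 agent-equivalents when concurrency drops to 2
```

Volume and session length are identical in both lines. Dropping from three concurrent chats to two raises the workload by half, and the schedule built on the first number has no room for it.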

Backlog channels are inventory problems, not waiting-line problems

Email and many case queues behave like inventory. Work arrives and sits until capacity works it down. The backlog can grow across days. It can also be “hidden” because customers are not always actively waiting in a visible line.

Erlang C is built for a line that clears within an interval. Backlog work does not clear within an interval. It carries.

That difference changes what “good” looks like.

A real-time queue is governed by wait time distribution. A backlog queue is governed by volume, age, and clearance rate. Planning a backlog requires burn-down targets, aging thresholds, and capacity that can flex day to day. It also needs a clear promise. Customers tolerate slower email response when the answer resolves the issue and when the timeline is clear. Customers do not tolerate silence.

When backlog is planned with real-time math, two things happen. Either the center overspends trying to make email behave like chat, or it under-plans and backlog ages until it turns into a customer trust problem.

Both outcomes are common. Both look like execution failure. Both are planning design failures.
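The inventory view can be sketched as a small first-in, first-out simulation. The version below assumes whole-day buckets and strict FIFO working, and the function name and sample volumes are hypothetical. It tracks the two numbers interval math does not show: open items and the age of the oldest one.

```python
from collections import deque


def simulate_backlog(daily_arrivals, daily_capacity, starting_backlog=0):
    """Return (open_items, oldest_age_days) at the end of each day."""
    queue = deque()  # entries are [arrival_day, open_count], oldest first
    if starting_backlog:
        queue.append([-1, starting_backlog])  # treated as one day old on day 0
    results = []
    for day, (arrived, capacity) in enumerate(zip(daily_arrivals, daily_capacity)):
        if arrived:
            queue.append([day, arrived])
        remaining = capacity
        # Work the oldest items first until capacity runs out.
        while queue and remaining > 0:
            worked = min(remaining, queue[0][1])
            queue[0][1] -= worked
            remaining -= worked
            if queue[0][1] == 0:
                queue.popleft()
        open_items = sum(count for _, count in queue)
        oldest_age = day - queue[0][0] if queue else 0
        results.append((open_items, oldest_age))
    return results


# Hypothetical week: 100 emails arrive per day, capacity clears 60 per day.
for day, (open_items, oldest) in enumerate(simulate_backlog([100] * 5, [60] * 5)):
    print(f"day {day}: {open_items} open, oldest is {oldest} day(s) old")
```

Each day looks like the one before it when viewed interval by interval. The backlog view shows 200 open items by the fifth day, with age starting to climb.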

The model assumptions do not match the routing reality

Traditional Erlang C assumes identical, interchangeable servers. Agents in a modern center are not interchangeable.

Most centers run skill-based routing. Some agents are trained for billing disputes, some for technical troubleshooting, some for regulated flows, some for enterprise accounts. Even within one agent group, proficiency varies. Channel proficiency varies too. A strong voice agent is not always a strong chat agent. A strong chat agent may struggle in messaging threads that require careful written tone and long-lived context.

This creates bottlenecks. One missing skill can choke the whole operation.

A headcount plan that looks sufficient in total can still fail because the scarce skill is under-covered. Erlang C can produce a number that looks right, while the scarce queue burns and the general queue stays calm. The floor then experiences chaos. The spreadsheet shows “adequate staff.”

Skill-based routing is not a detail. It is the operating system. Workforce planning needs to respect it.
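A minimal check makes the gap between total headcount and skill coverage visible. The sketch below assumes each agent counts toward exactly one skill, which is a simplification, and the skill names and numbers are hypothetical.

```python
def coverage_gaps(required_by_skill, staffed_by_skill):
    """Return {skill: shortfall} for every under-covered skill."""
    return {
        skill: need - staffed_by_skill.get(skill, 0)
        for skill, need in required_by_skill.items()
        if staffed_by_skill.get(skill, 0) < need
    }


# Hypothetical requirement and schedule for one hour.
required = {"general": 40, "billing_disputes": 8, "regulated": 6}
staffed = {"general": 48, "billing_disputes": 7, "regulated": 3}

total_ok = sum(staffed.values()) >= sum(required.values())
gaps = coverage_gaps(required, staffed)

print(total_ok)  # True: 58 staffed against 54 required
print(gaps)      # {'billing_disputes': 1, 'regulated': 3}
```

The total says the hour is covered. The per-skill view shows the regulated queue running at half strength.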

Service level targets imported from voice create bad incentives

Voice service level targets were designed around customers actively waiting in a queue. That model pushed fast answering and high accessibility.

Digital channels need different promises.

In chat, time to first response matters, but so does response pacing inside the conversation. A fast hello followed by long gaps feels worse than a slightly slower hello followed by steady progress.

In messaging, the thread can last hours. The promise is not “instant.” The promise is “reliable updates and clear ownership.”

In email, the promise is an SLA window and a path for exceptions.

When one voice service level is applied to every channel, incentives distort:

- backlog channels get starved because “real-time” targets dominate

- agents rush first responses to hit a metric and create longer resolution times

- intraday teams chase the wrong dial and miss the real constraint

Digital channels require separate control of first response, ongoing response cadence, and time to resolution. One number cannot hold all three.

What replaces Erlang C is not one better formula. It is a better operating split.

The simplest modern improvement is structural, not mathematical.

Split the world into two planning domains.

1. Real-time queues

Voice and any chat or messaging work where the customer is actively waiting. Plan these with models that account for customer patience and abandonment. The focus is on wait time distribution and accessibility.

2. Backlog queues

Email, cases, and any async messaging outside active periods. Plan these as inventory. The focus is backlog size, aging, and daily clearance targets.

This split immediately reduces confusion. It also clarifies staffing decisions. Real-time staffing protects the “answer now” promise. Backlog staffing protects the “resolve within window” promise.

Once this split is in place, the center can add a third layer: skill constraints. Capacity then gets allocated by skill and channel, not just by total headcount. This is the point where leaders start seeing stability.
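For the real-time domain, one common way to account for patience is the Erlang A model, which adds an average patience time to the Erlang C inputs. Choosing Erlang A here is an assumption for illustration, not a recommendation from this guide. The sketch solves the model numerically, and the function name, the queue truncation, and the sample inputs are all hypothetical.

```python
def erlang_a(arrivals_per_hour, aht_seconds, patience_seconds, agents, max_queue=2000):
    """Return (probability of waiting, fraction abandoning) for an M/M/n+M queue."""
    arrival_rate = arrivals_per_hour / 3600.0
    service_rate = 1.0 / aht_seconds
    abandon_rate = 1.0 / patience_seconds

    # Unnormalised steady-state weights for 0, 1, 2, ... contacts in the system.
    weights = [1.0]
    for k in range(1, agents + max_queue + 1):
        busy = min(k, agents)
        waiting = max(k - agents, 0)
        exit_rate = busy * service_rate + waiting * abandon_rate
        weights.append(weights[-1] * arrival_rate / exit_rate)

    total = sum(weights)
    probs = [w / total for w in weights]

    p_wait = sum(probs[agents:])  # arrivals that find every agent busy
    mean_queue = sum((k - agents) * p for k, p in enumerate(probs) if k > agents)
    p_abandon = abandon_rate * mean_queue / arrival_rate
    return p_wait, p_abandon


# Hypothetical interval: 600 chats per hour, 300 s handle time, 60 s average patience.
for n in (50, 55, 60):
    p_wait, p_abandon = erlang_a(600, 300, 60, n)
    print(f"{n} agents: {p_wait:.1%} wait, {p_abandon:.1%} abandon")
```

The patience input should come from measured abandonment behavior. A guessed value reintroduces the false precision this split is meant to remove.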

A practical planning stack that holds up under channel mix

A modern workforce approach can stay simple and still be stronger than an Erlang-only plan.

• Real-time staffing with patience in mind

Plan voice and live chat with a model that expects some abandonment. Use real patience data, not assumptions. Track abandonment, retries, and channel switching as part of demand. Treat those patterns as leading indicators of under-capacity.

• Backlog staffing based on inventory and aging

Set a daily clearance target that keeps backlog under control. Track backlog age distribution. Make burn-down visible. Run a weekly “backlog health” review the same way a finance team reviews aging receivables.

• Skill coverage as the primary risk

Build coverage by skill and by hour. Identify the scarce skills that can choke the system. Protect them during peaks. Use routing rules that avoid pulling scarce skills into general work unless truly needed.

• Concurrency as a managed dial

Define safe concurrency by topic, tooling, and agent proficiency. Set triggers for when to raise or lower concurrency. Monitor quality and rework when concurrency changes. Write the rulebook so it is not debated mid-spike.

• Buffers and stress tests instead of single-point certainty

Plan for a base case and a high case. Define what happens when the high case hits. Use a flex pool, overtime triggers, or cross-trained swing shifts. Avoid the brittle plan that assumes “average day” conditions.
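The concurrency rulebook from the list above can be written down as a small decision function. This is a sketch under stated assumptions: the thresholds, field names, and the one-step-at-a-time rule are hypothetical placeholders, and a real rulebook would likely hold one set of guardrails per topic or proficiency tier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConcurrencyGuardrails:
    # All thresholds are hypothetical placeholders; set them from your own data.
    min_chats: int = 1
    max_chats: int = 3
    rework_ceiling: float = 0.08  # lower the dial above this rework rate
    quality_floor: float = 0.90   # lower the dial below this quality score
    raise_margin: float = 0.02    # raise only when clear of both limits by this much


def next_concurrency(current, rework_rate, quality, rules):
    """Return the per-agent chat limit for the next interval."""
    breached = rework_rate > rules.rework_ceiling or quality < rules.quality_floor
    if breached:
        return max(rules.min_chats, current - 1)
    comfortable = (
        rework_rate <= rules.rework_ceiling - rules.raise_margin
        and quality >= rules.quality_floor + rules.raise_margin
    )
    if comfortable:
        return min(rules.max_chats, current + 1)
    return current  # inside the guardrails but not by much: hold steady


rules = ConcurrencyGuardrails()
print(next_concurrency(3, rework_rate=0.12, quality=0.95, rules=rules))  # 2
print(next_concurrency(2, rework_rate=0.03, quality=0.95, rules=rules))  # 3
print(next_concurrency(2, rework_rate=0.07, quality=0.95, rules=rules))  # 2
```

The hold band between the lower and raise conditions keeps the dial from flapping every interval. The point of writing it as code or as a table is the same: the decision is made before the spike, not during it.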

This stack is not exotic. It is closer to how operations already run when they are forced to recover from bad weeks. The difference is making it the default design, not the emergency response.

Intraday control matters more than the planning method

Even a strong plan will miss sometimes. Forecast error happens. Absences happen. Outages happen. Viral spikes happen.

Modern channel mix makes intraday control more important because correction is harder. It is not enough to see contact volume. Digital contact counts can be misleading, because one contact may be a short chat and another a thread that stays open for days. The real dials are effort load and backlog age.

Intraday teams need three dashboards that many centers still lack:

1. Effort load by channel and skill, not just contacts.

2. Backlog aging distribution with breach forecasting.

3. Concurrency, quality, and rework signals side by side.

With these, control becomes proactive.

Without these, control becomes reactive and expensive.
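Breach forecasting on the backlog dashboard does not need a heavy model to start. The sketch below assumes strict oldest-first working and a steady clearance rate, so new arrivals queue behind existing items and do not affect them. The function name and sample figures are hypothetical.

```python
def forecast_breaches(open_item_ages_hours, sla_hours, clearance_per_hour):
    """Count open items expected to pass the SLA window before they are picked up."""
    oldest_first = sorted(open_item_ages_hours, reverse=True)
    breaches = 0
    for position, age in enumerate(oldest_first):
        # Assumption: the item at this position waits for everything ahead of it.
        hours_until_picked_up = position / clearance_per_hour
        if age + hours_until_picked_up > sla_hours:
            breaches += 1
    return breaches


# Hypothetical email queue with a 24-hour promise.
ages = [23, 22, 21, 10]
print(forecast_breaches(ages, sla_hours=24, clearance_per_hour=1.0))  # 0
print(forecast_breaches(ages, sla_hours=24, clearance_per_hour=0.5))  # 1
```

Nothing in the queue has breached yet in either case. At the slower clearance rate, the forecast flags one item hours before it crosses the line, which is the window in which an intraday team can still act.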

What is the end goal here?

The reason Erlang C fails in modern centers is not that the formula is wrong. It is that the work no longer matches the model assumptions.

Digital channels introduce concurrency, context switching, and backlog aging. Customers abandon and retry. Skill-based routing creates bottlenecks. One service level target cannot govern all channels.

A center can still use Erlang C for pure voice queues where the assumptions are close enough. The failure is using it as the single source of truth across a digital channel mix.

The fix is to split real-time and backlog work, plan each with the right control, respect skill constraints, manage concurrency as a dial, and run buffers and intraday triggers like a real operating system.

That is what stops “the schedule should have worked” from being a weekly ritual.

Why Service Level Planning Collapses in a Chat-and-Message World was originally published in GZP Blog on Medium.